WEEKLY AI NEWS: RESEARCH, NEWS, RESOURCES, AND PERSPECTIVES

AI & ML news: Week 8–14 April

Google and Mistral release new models; Apple lays off its autonomous car engineers

The most interesting news, repositories, articles, and resources of the week

Check and star this repository where the news will be collected and indexed:

You will find the news first in GitHub. Single posts are also collected here:

Research

- Smartphone app could help detect early-onset dementia cause, study finds. App-based cognitive tests were found to be proficient at detecting frontotemporal dementia in those most at risk. Scientists have demonstrated that cognitive tests done via a smartphone app are at least as sensitive to detecting early signs of frontotemporal dementia in people with a genetic predisposition to the condition as medical evaluations performed in clinics.

- Unsegment Anything by Simulating Deformation. A novel strategy called “Anything Unsegmentable” aims to prevent digital photos from being divided into discrete categories by potent AI models, potentially resolving copyright and privacy concerns.

- Evaluating LLMs at Detecting Errors in LLM Responses. A benchmark called ReaLMistake has been introduced by researchers to methodically identify mistakes in lengthy language model answers.

- Dynamic Prompt Optimizing for Text-to-Image Generation. Researchers have created Prompt Auto-Editing (PAE), a technique that uses diffusion models such as Imagen and Stable Diffusion to advance text-to-image generation. With the use of online reinforcement learning, this novel method dynamically modifies the weights and injection timings of particular words to automatically improve text prompts.

- No Time to Train: Empowering Non-Parametric Networks for Few-shot 3D Scene Segmentation. A system called Seg-NN simplifies the 3D segmentation procedure. These models don’t have the usual domain gap problems and can quickly adapt to new, unseen classes because they don’t require a lot of pre-training.

- Foundation Model for Advancing Healthcare: Challenges, Opportunities, and Future Directions. The potential of Healthcare Foundation Models (HFMs) to transform medical services is examined in this extensive survey. These models are well-suited to adapt to different healthcare activities since they have been pre-trained on a variety of data sets. This could lead to an improvement in intelligent healthcare services in a variety of scenarios.

- SwapAnything: Enabling Arbitrary Object Swapping in Personalized Visual Editing. A new algorithm called SwapAnything can swap out objects in an image for other objects of your choosing without affecting the image’s overall composition. Unlike other tools, it can replace any object, not only the focal point, and it excels at ensuring that the replaced object blends seamlessly into the original image. It relies on pretrained diffusion models, idea vectors, and inversion.

- UniFL: Improve Stable Diffusion via Unified Feedback Learning. UniFL is a technique that uses a pretty complex cascade of feedback steps to enhance the output quality of diffusion models. All of these help to raise the image generation models’ aesthetics, preference alignment, and visual quality. The methods can be applied to enhance any image generating model, regardless of the underlying model.

- Object-Aware Domain Generalization for Object Detection. In order to tackle the problem of object detection in single-domain generalization (S-DG), the novel OA-DG approach presents two new techniques: OA-Mix for data augmentation and OA-Loss for training.

- VAR: a new visual generation method elevates GPT-style models beyond diffusion🚀 & Scaling laws observed. Code for the latest “next-resolution prediction” project, which presents the process of creating images as a progressive prediction of progressively higher resolution. A demo notebook and inference scripts are included in the repository. Soon, the training code will be made available.

- SqueezeAttention: 2D Management of KV-Cache in LLM Inference via Layer-wise Optimal Budget. SqueezeAttention is a newly developed technique that optimizes the key-value (KV) cache of large language models, resulting in a 30% to 70% reduction in memory usage and a doubling of throughput.

- Measuring the Persuasiveness of Language Models. A study of model persuasiveness found that Claude 3 Opus comes close to human persuasiveness, a result assessed with statistical tests and multiple-comparison adjustments. Humans were marginally more convincing, although not by a statistically significant amount, highlighting a trend in which larger, more complex models are becoming more persuasive. Claude 3 Opus was the most persuasive model tested. A control condition, which showed predictably low persuasiveness for undisputed facts, supported the study’s methodological reliability.

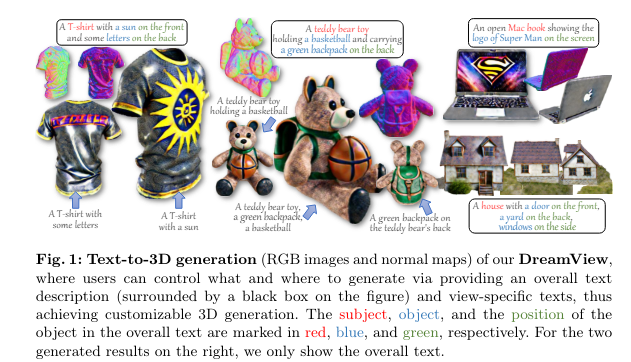

- DreamView: Injecting View-specific Text Guidance into Text-to-3D Generation. DreamView presents a novel method for turning text descriptions into 3D objects that may be extensively customized from various angles while maintaining the object’s overall consistency.

- Hash3D: Training-free Acceleration for 3D Generation. By adopting a hashing algorithm that takes advantage of feature-map redundancy across similar camera positions and diffusion time steps, Hash3D presents a new way to accelerate 3D generative modeling.

- MoCha-Stereo: Motif Channel Attention Network for Stereo Matching. The Motif Channel Attention Stereo Matching Network (MoCha-Stereo) is a new method that preserves geometric structures that are sometimes lost in conventional stereo-matching techniques.

- Efficient and Generic Point Model for Lossless Point Cloud Attribute Compression. PoLoPCAC is a lossless point cloud attribute compression technique that combines excellent adaptability and great efficiency at different point cloud densities and scales.

- Scaling Multi-Camera 3D Object Detection through Weak-to-Strong Eliciting. In order to boost surround refinement in Multi-Camera 3D Object Detection (MC3D-Det), a field enhanced by bird’s-eye view technologies, this study introduces a weak-to-strong eliciting framework.

- InstantMesh: Efficient 3D Mesh Generation from a Single Image with Sparse-view Large Reconstruction Models. This project introduces InstantMesh, a framework with unparalleled quality and scalability that creates 3D meshes instantaneously from a single image.

- Exploring Concept Depth: How Large Language Models Acquire Knowledge at Different Layers? A recent study examined how different layers within large language models understand distinct concepts. It found that simpler tasks are handled by earlier layers, while more complicated tasks demand deeper processing.

- SplatPose & Detect: Pose-Agnostic 3D Anomaly Detection. SplatPose is a revolutionary approach that uses 3D Gaussian splatting to address the problem of anomaly identification in 3D objects from different positions.

News

- Facebook and Instagram to label digitally altered content ‘made with AI’. Parent company Meta also added ‘high-risk’ label to AI-altered content that deceives the public on “a matter of importance”

- Google considering charging for internet searches with AI, reports say. The cost of artificial intelligence services could mean leaders in the sector turning to subscription models

- Apple lays off 600 workers in California after shuttering self-driving car project. Tech company cuts employees from eight offices in Santa Clara in its first big wave of post-pandemic job cuts

- AMD to open source Micro Engine Scheduler firmware for Radeon GPUs. AMD plans to document and open source its Micro Engine Scheduler (MES) firmware for GPUs, giving users more control over Radeon graphics cards.

- Investors in talks to help Elon Musk’s xAI raise $3 billion: report. Investors close to Elon Musk are in talks to help his artificial-intelligence startup xAI raise $3 billion in a round that would value the company at $18 billion, the Wall Street Journal reported on Friday.

- Introducing Command R+: A Scalable LLM Built for Business. Cohere has launched Command R+, a powerful, scalable LLM with multilingual coverage in ten important languages and tool use capabilities. It is intended for enterprise use cases.

- Qwen1.5-32B: Fitting the Capstone of the Qwen1.5 Language Model Series. A growing consensus within the field now points to a model with approximately 30 billion parameters as the optimal “sweet spot” for achieving both strong performance and manageable resource requirements. In response to this trend, we are proud to unveil the latest additions to our Qwen1.5 language model series: Qwen1.5-32B and Qwen1.5-32B-Chat.

- Nvidia Tops Llama 2, Stable Diffusion Speed Trials. Now that we’re firmly in the age of massive generative AI, it’s time to add two such behemoths, Llama 2 70B and Stable Diffusion XL, to MLPerf’s inferencing tests. Version 4.0 of the benchmark tests more than 8,500 results from 23 submitting organizations. As has been the case from the beginning, computers with Nvidia GPUs came out on top, particularly those with its H200 processor. But AI accelerators from Intel and Qualcomm were in the mix as well.

- Rabbit partners with ElevenLabs to power voice commands on its device. Hardware maker Rabbit has tapped a partnership with ElevenLabs to power voice commands on its devices. Rabbit is set to ship the first set of r1 devices next month after getting a ton of attention at the Consumer Electronics Show (CES) at the start of the year.

- DALL-E now lets you edit images in ChatGPT. Tweak your AI creations without leaving the chat.

- Jony Ive and OpenAI’s Sam Altman Seeking Funding for Personal AI Device. OpenAI CEO Sam Altman and former Apple design chief Jony Ive have officially teamed up to design an AI-powered personal device and are seeking funding, reports The Information.

- Hugging Face TGI Reverts to Open Source License. Hugging Face temporarily switched its well-known and powerful inference server to a non-commercial license in an effort to deter bigger companies from running a rival offering. Community involvement decreased, while business outcomes remained unchanged. The project is now back under a more permissive license.

- Securing Canada’s AI advantage. To support Canada’s AI industry, Prime Minister Justin Trudeau unveiled a $2.4 billion investment package beginning with Budget 2024. The package comprises tools to enable ethical AI adoption, support for AI start-ups, and financing for computational skills. These policies are intended to maintain Canada’s competitive advantage in AI globally, boost productivity, and hasten the growth of jobs. The money will also be used to fortify the Artificial Intelligence and Data Act’s enforcement as well as establish a Canadian AI Safety Institute.

- Yahoo is buying Artifact, the AI news app from the Instagram co-founders. Instagram’s co-founders built a powerful and useful tool for recommending news to readers — but could never quite get it to scale. Yahoo has hundreds of millions of readers — but could use a dose of tech-forward cool to separate it from all the internet’s other news aggregators.

- Now there’s an AI gas station with robot fry cooks. There’s a little-known hack in rural America: you can get the best fried food at the gas station (or in the case of a place I went to on my last road trip, shockingly good tikka masala). Now, one convenience store chain wants to change that with a robotic fry cook that it’s bringing to a place once inhabited by a person who may or may not smell like a recent smoke break and cooks up a mean fried chicken liver.

- Elon Musk predicts superhuman AI will be smarter than people next year. His claims come with a caveat that shortages of training chips and growing demand for power could limit plans in the near term

- Gemma Family Expands with Models Tailored for Developers and Researchers. Google announced the first round of additions to the Gemma family, expanding the possibilities for ML developers to innovate responsibly: CodeGemma for code completion and generation tasks as well as instruction following, and RecurrentGemma, an efficiency-optimized architecture for research experimentation.

- Meta confirms that its Llama 3 open source LLM is coming in the next month. At an event in London on Tuesday, Meta confirmed that it plans an initial release of Llama 3 — the next generation of its large language model used to power generative AI assistants — within the next month.

- Intel details Gaudi 3 at Vision 2024 — new AI accelerator sampling to partners now, volume production in Q3. Intel made a slew of announcements during its Vision 2024 event today, including deep-dive details of its new Gaudi 3 AI processors, which it claims offer up to 1.7X the training performance, 50% better inference, and 40% better efficiency than Nvidia’s market-leading H100 processors, but for significantly less money.

- Apple’s new AI model could help Siri see how iOS apps work. Apple’s Ferret LLM could help allow Siri to understand the layout of apps in an iPhone display, potentially increasing the capabilities of Apple’s digital assistant. Apple has been working on numerous machine learning and AI projects that it could tease at WWDC 2024. In a just-released paper, it now seems that some of that work has the potential for Siri to understand what apps and iOS itself looks like.

- Aerospace AI Hackathon Projects. Together, 200 AI and aerospace experts created an amazing array of tools, including AI flight planners, AI air traffic controllers, and Apple Vision Pro flight simulators, as a means of prototyping cutting-edge solutions for the aviation and space industries.

- AI race heats up as OpenAI, Google, and Mistral release new models. The launches came within 12 hours of one another, and more activity is expected in the industry over the summer

- Next-generation Meta Training and Inference Accelerator. The next iteration of Meta’s AI accelerator chip has been revealed. Its development was centered on throughput (11 TFLOPs at int8) and chip memory (128GB at 5nm).

- Google’s Gemini Pro 1.5 enters public preview on Vertex AI. Gemini 1.5 Pro, Google’s most capable generative AI model, is now available in public preview on Vertex AI, Google’s enterprise-focused AI development platform. The company announced the news during its annual Cloud Next conference, which is taking place in Las Vegas this week.

- Microsoft is working on sound recognition AI technologies capable of detecting natural disasters. The Redmond-based tech giant is working on performant sound recognition AI technologies that would make Copilot (and any other AI model, such as ChatGPT) capable of detecting upcoming natural disasters, such as earthquakes and storms.

- Amazon scrambles for its place in the AI race. With its multibillion-dollar bet on Anthropic and its forthcoming Olympus model, Amazon is pushing hard to be a leader in AI.

- Elon Musk’s updated Grok AI claims to be better at coding and math. It’ll be available to early testers ‘in the coming days.’ Elon Musk’s answer to ChatGPT is getting an update to make it better at math, coding, and more. Musk’s xAI has launched Grok-1.5 to early testers with “improved capabilities and reasoning” and the ability to process longer contexts. The company claims it now stacks up against GPT-4, Gemini Pro 1.5, and Claude 3 Opus in several areas.

- Anthropic’s Haiku Beats GPT-4 Turbo in Tool Use — Sometimes. Anthropic’s beta tool use API is better than GPT-4 Turbo in 50% of cases on the Berkeley Function Calling benchmark.

- UK has real concerns about AI risks, says competition regulator. The concentration of power among just six big tech companies ‘could lead to winner-takes-all dynamics’

- New bill would force AI companies to reveal use of copyrighted art. Adam Schiff introduces bill amid growing legal battle over whether major AI companies have made illegal use of copyrighted works

- Randomness in computation wins computer-science ‘Nobel’. Computer scientist Avi Wigderson is known for clarifying the role of randomness in algorithms, and for studying their complexity. A leader in the field of computational theory is the latest winner of the A. M. Turing Award, sometimes described as the ‘Nobel Prize’ of computer science.

- Introducing Rerank 3: A New Foundation Model for Efficient Enterprise Search & Retrieval. Rerank 3, the newest foundation model from Cohere, was developed with enterprise search and Retrieval Augmented Generation (RAG) systems in mind. The model may be integrated into any legacy program with built-in search functionality and is compatible with any database or search index. With a single line of code, Rerank 3 can improve search speed or lower the cost of running RAG applications with minimal effect on latency.

- Meta to broaden labeling of AI-made content. Meta admits its current labeling policies are “too narrow” and that a stronger system is needed to deal with today’s wider range of AI-generated content and other manipulated content, such as a January video that appeared to show President Biden inappropriately touching his granddaughter.

- Mistral’s New Model. The Mixtral-8x22B Large Language Model (LLM) is a pre-trained generative Sparse Mixture of Experts.

- Waymo self-driving cars are delivering Uber Eats orders for the first time. Uber Eats customers may now receive orders delivered by one of Waymo’s self-driving cars for the first time in the Phoenix metropolitan area. It is part of a multiyear collaboration between the two companies unveiled last year.

- JetMoE: Reaching LLaMA2 Performance with 0.1M Dollars. This mixture-of-experts model was trained on a modest amount of compute using available datasets. It performs on par with the considerably more costly Meta Llama 2 7B variant.

- Google blocking links to California news outlets from search results. Tech giant is protesting proposed law that would require large online platforms to pay ‘journalism usage fee’

- House votes to reapprove law allowing warrantless surveillance of US citizens. Fisa allows for the monitoring of foreign communications, as well as the collection of citizens’ messages and calls

- Tesla settles lawsuit over 2018 fatal Autopilot crash of Apple engineer. Walter Huang was killed when his car steered into a highway barrier and Tesla will avoid questions about its technology in a trial

Resources

- SWE-agent. SWE-agent turns LMs (e.g. GPT-4) into software engineering agents that can fix bugs and issues in real GitHub repositories.

- Schedule-Free Learning. Faster training without schedules: no need to specify the stopping time/steps in advance!

- State-of-the-art Representation Fine-Tuning (ReFT) methods. ReFT is a novel, parameter-efficient approach to language model fine-tuning. It achieves good performance at a significantly lower cost than even PEFT.

- The Top 100 AI for Work — April 2024. Following our AI Top 150, we spent the past few weeks analyzing data on the top AI platforms for work. This report shares key insights, including the AI tools you should consider adopting to work smarter, not harder.

- LLocalSearch. LLocalSearch is a completely locally running search aggregator using LLM Agents. The user can ask a question and the system will use a chain of LLMs to find the answer. The user can see the progress of the agents and the final answer. No OpenAI or Google API keys are needed.

- llm.c. LLM training in simple, pure C/CUDA. There is no need for 245MB of PyTorch or 107MB of CPython. For example, training GPT-2 (CPU, fp32) is ~1,000 lines of clean code in a single file. It compiles and runs instantly, and exactly matches the PyTorch reference implementation.

- AIOS: LLM Agent Operating System. AIOS, a Large Language Model (LLM) Agent operating system, embeds a large language model into Operating Systems (OS) as the brain of the OS, enabling an operating system “with soul” — an important step towards AGI. AIOS is designed to optimize resource allocation, facilitate context switch across agents, enable concurrent execution of agents, provide tool service for agents, maintain access control for agents, and provide a rich set of toolkits for LLM Agent developers.

- Anthropic Tool use (function calling). Anthropic has released a public beta that lets Claude interact with custom client-side tools supplied in API requests. To use the feature, developers need to include the ‘anthropic-beta: tools-2024-04-04’ header. Claude’s capabilities can be extended with any tool that is described by a detailed JSON schema.

- Flyflow. Flyflow is API middleware to optimize LLM applications: same response quality, 5x lower latency, security, and much higher token limits.

- ChemBench. LLMs gain importance across domains. To guide improvement, benchmarks have been developed. One of the most popular ones is BIG-bench which currently only includes two chemistry-related tasks. The goal of this project is to add more chemistry benchmark tasks in a BIG-bench-compatible way and develop a pipeline to benchmark frontier and open models.

- Longcontext Alpaca Training. On an H100, train with context windows of more than 200k tokens using a new gradient accumulation offloading technique.

- attorch. attorch is a subset of PyTorch’s NN module, written purely in Python using OpenAI’s Triton. Its goal is to be an easily hackable, self-contained, and readable collection of neural network modules whilst maintaining or improving upon the efficiency of PyTorch.

- Policy-Guided Diffusion. A novel approach to agent training in offline environments is provided by policy-guided diffusion, which generates synthetic trajectories that closely match target policies and behavior. By producing more realistic training data, this method greatly enhances the performance of offline reinforcement learning models.

- Ada-LEval. Ada-LEval is a pioneering benchmark to assess the long-context capabilities with length-adaptable questions. It comprises two challenging tasks: TSort, which involves arranging text segments into the correct order, and BestAnswer, which requires choosing the best answer to a question among multiple candidates.
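For readers curious about the Anthropic tool use item above, here is a minimal sketch of how such a request might be assembled. It only builds the headers and JSON body and sends nothing. The field names (`name`, `description`, `input_schema`) reflect my understanding of the beta documentation and should be checked against the current docs; the `get_weather` tool, the helper functions, and the model name are hypothetical placeholders. Authentication and version headers are deliberately left out.

```python
import json

# Beta header quoted in the item above (may no longer be required; check the docs).
BETA_HEADER = {"anthropic-beta": "tools-2024-04-04"}


def make_tool(name, description, properties, required):
    """Describe one client-side tool, using a JSON Schema for its inputs."""
    return {
        "name": name,
        "description": description,
        "input_schema": {
            "type": "object",
            "properties": properties,
            "required": list(required),
        },
    }


def build_request(model, user_text, tools, max_tokens=1024):
    """Assemble headers and a JSON body; nothing is sent over the network."""
    headers = {"content-type": "application/json", **BETA_HEADER}
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "tools": tools,
        "messages": [{"role": "user", "content": user_text}],
    }
    return headers, json.dumps(body)


# Hypothetical tool and placeholder model name, for illustration only.
weather_tool = make_tool(
    "get_weather",
    "Get the current weather for a city.",
    {"city": {"type": "string", "description": "City name"}},
    ["city"],
)
headers, body = build_request(
    "claude-3-opus-20240229", "What is the weather in Paris?", [weather_tool]
)
```

The more precise each tool's description and schema, the easier it should be for the model to decide when to call the tool and with which arguments.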

Perspectives

- “Time is running out”: can a future of undetectable deep-fakes be avoided? Tell-tale signs of generative AI images are disappearing as the technology improves, and experts are scrambling for new methods to counter disinformation

- Four Takeaways on the Race to Amass Data for A.I. To make artificial intelligence systems more powerful, tech companies need online data to feed the technology. Here’s what to know.

- TechScape: Could AI-generated content be dangerous for our health? From hyper realistic deep fakes to videos that not only hijack our attention but also our emotions, tech seems increasingly full of “cognito-hazards”

- AI can help to tailor drugs for Africa — but Africans should lead the way. Computational models that require very little data could transform biomedical and drug development research in Africa, as long as infrastructure, trained staff, and secure databases are available.

- Breaking news: Scaling will never get us to AGI. In order to create artificial general intelligence, additional methods must be used because neural networks’ poor capacity to generalize beyond their training data limits their reasoning and trustworthiness.

- Americans’ use of ChatGPT is ticking up, but few trust its election information. It’s been more than a year since ChatGPT’s public debut set the tech world abuzz. And Americans’ use of the chatbot is ticking up: 23% of U.S. adults say they have ever used it, according to a Pew Research Center survey conducted in February, up from 18% in July 2023.

- Can Demis Hassabis Save Google? Demis Hassabis, the founder of DeepMind, is currently in charge of Google’s unified AI research division and hopes to keep the tech behemoth ahead of the competition in the field with innovations like AlphaGo and AlphaFold. Despite these achievements, obstacles remain in incorporating AI into physical products and in facing competition from rivals such as OpenAI with ChatGPT. Having made a substantial contribution to AI, Hassabis now has to work within Google’s product strategy in order to make use of DeepMind’s research breakthroughs.

- Is ChatGPT corrupting peer review? Telltale words hint at AI use. A study of review reports identifies dozens of adjectives that could indicate text written with the help of chatbots.

- AI-fuelled election campaigns are here — where are the rules? Political candidates are increasingly using AI-generated ‘softfakes’ to boost their campaigns. This raises deep ethical concerns.

- How to break big tech’s stranglehold on AI in academia. Deep-learning artificial intelligence (AI) models have become an attractive tool for researchers in many areas of science and medicine. However, the development of these models is prohibitively expensive, owing mainly to the energy consumed in training them.

- Ready or not, AI is coming to science education — and students have opinions. As educators debate whether it’s even possible to use AI safely in research and education, students are taking a role in shaping its responsible use.

- ‘Without these tools, I’d be lost’: how generative AI aids in accessibility. A rush to place barriers around the use of artificial intelligence in academia could disproportionately affect those who stand to benefit most.

Medium articles

A list of the Medium articles I have read and found the most interesting this week:

- Andy McDonald, Using Python to Explore and Understand Equations in Petrophysics, link

- Daniel Warfield, Groq, and the Hardware of AI — Intuitively and Exhaustively Explained, link

- Ignacio de Gregorio, JAMBA, the First Powerful Hybrid Model is Here, link

- Christopher Tao, How to Use Python Built-In Decoration to Improve Performance Significantly, link

- C.A. Exline, These are my top ten web browsers for Linux operating systems., link

- David C. Wyld, Brandolini’s Law, or the Bullshit Asymmetry Principle, link

- Mandar Karhade, MD. PhD., Google’s CodeGemma: I am not Impressed, link

- Rukshan Pramoditha, Data Preprocessing for K-Nearest Neighbors (KNN), link

- AI TutorMaster, VoiceCraft: AI-Powered Speech Editing and Crafting, link

- Matt Kornfield, What AI Does (and Doesn’t) Change About Intellectual Property, link

Meme of the week

What do you think about it? Did any news capture your attention? Let me know in the comments.

If you have found this interesting:

You can look for my other articles, and you can also connect with or reach me on LinkedIn, where I am open to collaborations and projects. Check this repository containing weekly updated ML & AI news. You can also subscribe for free to get notified when I publish a new story.

Here is the link to my GitHub repository, where I am collecting code and many resources related to machine learning, artificial intelligence, and more.

or you may be interested in one of my recent articles: